Virtual production is revolutionizing filmmaking. More and more film and television productions rely on its workflows, which give filmmakers greater control over when and how they envisage a scene. This growing interest is creating new creative and innovative roles for real-time artists at every stage of production.

In this article, we’ll explore the virtual production process in more detail, looking at a typical workflow and how it relates to the role of a real-time 3D artist.

Where are virtual production workflows used?

Virtual production is a collaborative process that marries the physical world of filmmaking (actors, props, etc.) with the digital world (lighting, VFX, etc.) in one process using real-time technology.

Virtual production workflows and techniques are commonly employed in:

- Film: real-time artists for film and TV are required both on set during production and for many of the pre- and post-production stages. For now, it's usually only bigger-budget films that can afford to use virtual production workflows during the filming stage (e.g., an LED stage Volume, motion capture, etc.). However, even smaller-budget films and television series can employ the many affordable pre- and post-production workflows available, such as previs and postvis.

- Video games: video games popularized the use of real-time technology (using a game engine), which was then adopted by filmmakers and other industries. Now, real-time artists for video games are adopting film-centric virtual production techniques. For example, games such as Call of Duty: Advanced Warfare, Halo 4, and Far Cry employed Technoprops motion capture technology from the virtual production division of Industrial Light & Magic (ILM).

Technoprops' Academy Award-winning Head-Mounted Camera unit. (Image: ILM)

- Commercial industries: commercial virtual production covers many industries and is used for various purposes. Real-time 3D artists for commercial industries work in broadcasting, engineering, architecture, and product design, to name a few, and use many of virtual production's innovative workflows. For example, the Weather Channel illustrated the effects of Hurricane Florence by placing its reporter in front of a green screen, so that viewers saw digital scenes showing the water surge levels around the reporter.

What is a virtual production workflow?

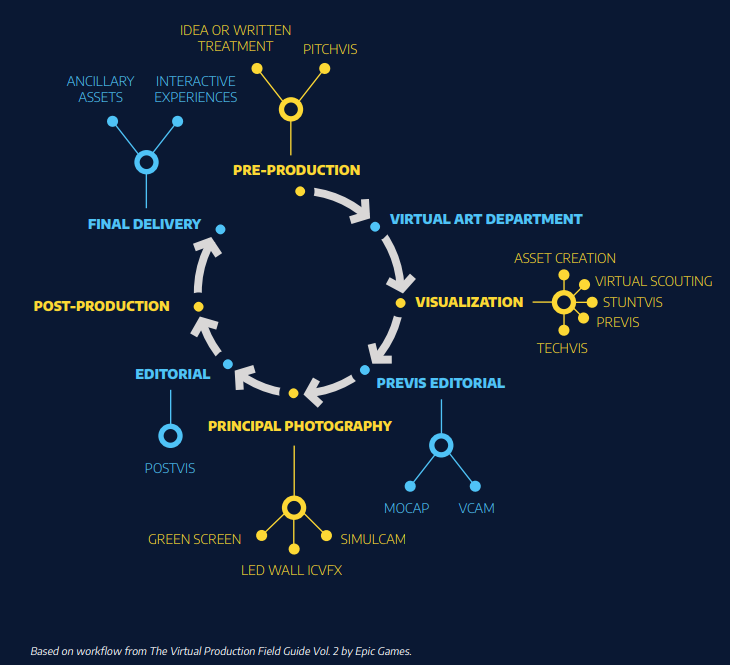

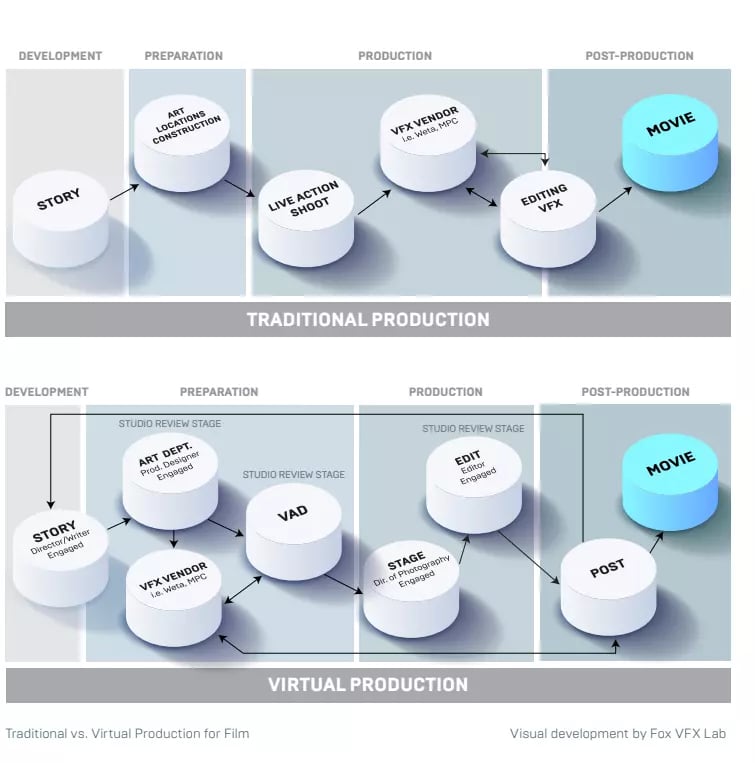

At first glance, a virtual production workflow looks more complicated than a traditional one. However, while it may have more steps, it’s actually much more efficient and artist-friendly.

An example of a typical virtual production workflow (Source: Epic Games).

Learn more about virtual production workflows by taking one of CG Spectrum's comprehensive Unreal Engine-authorized virtual production courses.

Here are (some of) the simplified steps of a typical virtual production workflow including what is required of a real-time 3D artist:

1. Pre-production (Development) - This is when the idea for the film or TV series is first visualized for approval from investors, producers, and studios. Real-time 3D artists convey the story and tone of a production by creating digital scenes known as pitchvis (like a preliminary trailer), visualizing the filmmakers’ creative intent. Pitchvis has been effective in greenlighting Hollywood blockbusters such as Godzilla (2014), Men in Black 3, and World War Z.

Pitchvis for World War Z, which secured the film's funding.

2. Virtual Art Department (VAD) - The virtual art department begins creating real-time assets that will be used in a film, from rough 3D prototypes to camera-ready props, characters, and environments. This team helps determine which assets will be built digitally as part of the virtual world and displayed on the Volume, which will be live-action props and set pieces, and which assets will need to be created in post-production (at a VFX studio). The VAD works very closely with previs artists and visual effects teams to ensure the production’s priorities are met and that principal photography and other stages go smoothly.

“There is a difference on the set when we’re shooting virtual assets that look good. Everybody gets excited, and people take it more seriously and put more work into every single shot.”

Ben Grossmann, Oscar and Emmy Award-winning Visual Effects Supervisor

3. Visualization - Filmmakers are generally most familiar with this part of the virtual production workflow. Real-time 3D artists generate digital imagery using a game engine to convey a filmmaker's vision of a sequence or a shot.

There are several types of visualizations used in virtual production workflows, including:

- Asset creation: When building a virtual world, everything needs to be created digitally in 3D using modeling software such as Maya and a game engine like Unreal Engine. These 3D models are the props, sets, and characters that make up the virtual worlds projected on the LED screen. Mocap cameras (simulcams) also require assets that are superimposed over the live-action footage on video screens, assisting the crew with framing and timing.

- Virtual Scouting: This is the creation of a digital version of proposed sets and locations using movable digital props and virtual cameras with lenses. Crew members can interact with and explore a set on a computer or through a head-mounted virtual reality display. It helps with planning shots and defining set builds.

-

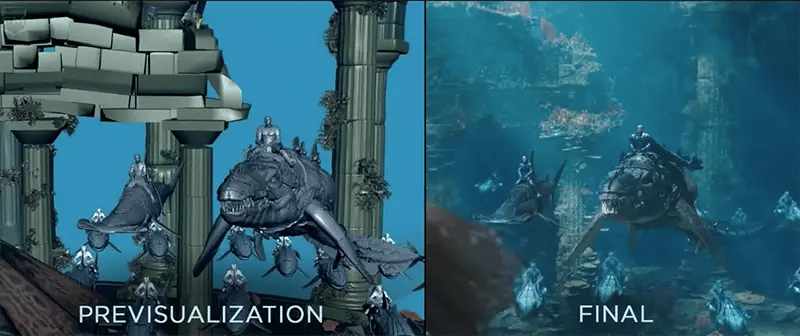

Previs: Think of a previs as a virtual sandbox where you can explore story ideas for lighting, camera angles/movement, stage direction, and editing before you start the actual production.

Example of Aquaman previs vs. the final shot (Image: WarnerMedia)

- Techvis: Techvis combines virtual elements with real-world equipment, and captured footage with virtual assets, to help plan shots, particularly for use by FX artists. Based on physics and camera data, it helps filmmakers check that camera lenses, movements, and placement are plausible and will work in post-production.

- Stuntvis: As its name suggests, stuntvis helps plan stunts using the real-world physics simulations available in real-time engines. It includes details of scene blocking, choreography, stunt testing, set design, prop and weapon development, and camera placement and movement. It helps test stunts before actors or stunt performers attempt them in real life.

4. Principal Photography (Production) - This is when the virtual worlds are displayed on LED screens surrounding the actors, props, and smaller set pieces in the foreground.

The LED screens that make up the Volume generate more realistic lighting and reflections, minimizing the need for large, complicated lighting set-ups on set. Camera tracking technology then adjusts the virtual scene behind the actor based on the position and movement of the live-action cameras, creating parallax (helping the virtual world and the physical world appear to exist in the same space).
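
The parallax effect comes down to simple geometry. As a rough illustration only (not how Unreal Engine or any particular tracking system implements it), the Python sketch below treats the LED wall as a flat plane at z = 0 and works out where a virtual point behind the wall has to be drawn so that it lines up from the tracked camera's position. The names `Vec3`, `wall_position`, and `wall_shift` are hypothetical, and the flat wall is a simplifying assumption: real Volumes are often curved and render a full camera view rather than single points.

```python
from dataclasses import dataclass
from typing import Tuple


@dataclass(frozen=True)
class Vec3:
    """A point in stage space. The LED wall is assumed to be the plane z = 0."""
    x: float
    y: float
    z: float


def wall_position(camera: Vec3, point: Vec3) -> Tuple[float, float]:
    """Return the (x, y) spot on the wall where a virtual point must be drawn
    so that it appears in the right direction from the camera.

    Assumes the camera is in front of the wall (z > 0) and the virtual point
    is on or behind it (z <= 0).
    """
    if camera.z <= 0 or point.z > 0:
        raise ValueError("camera must be in front of the wall, point on or behind it")
    # Fraction of the way along the camera-to-point ray at which it crosses z = 0.
    t = camera.z / (camera.z - point.z)
    return (
        camera.x + t * (point.x - camera.x),
        camera.y + t * (point.y - camera.y),
    )


def wall_shift(camera_before: Vec3, camera_after: Vec3, point: Vec3) -> float:
    """Horizontal distance the drawn point moves on the wall when the camera moves."""
    x_before, _ = wall_position(camera_before, point)
    x_after, _ = wall_position(camera_after, point)
    return x_after - x_before


if __name__ == "__main__":
    cam_a = Vec3(0.0, 0.0, 4.0)   # camera 4 units in front of the wall
    cam_b = Vec3(1.0, 0.0, 4.0)   # same camera after moving 1 unit sideways
    for depth in (0.0, -1.0, -12.0):
        shift = wall_shift(cam_a, cam_b, Vec3(0.0, 0.0, depth))
        print(f"virtual point at z = {depth}: shifts {shift:.2f} on the wall")
```

With the camera 4 units from the wall and moving 1 unit sideways, a point 1 unit behind the wall shifts by about 0.2 units, a point 12 units behind shifts by 0.75 units, and a point on the wall itself does not move. That difference between near and far objects is the parallax the camera sees.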

The making of Aquaman, showing the many ways virtual production was used during principal photography, from previs to motion capture to LED screens (and demonstrating the non-linear nature and versatility of virtual production).

Real-time artists and the virtual production team can make live updates to the virtual worlds to optimize lighting, camera angles/movement, and better integrate digital assets with live-action props and set pieces. Other virtual production techniques such as greenscreen/bluescreen and simulcam are also used during this stage.

5. Post-production - Although virtual production allows for a lot of the visual effects to take place during the pre-production and production phases, additional visual effects—3D models, compositing, FX, etc.—are commonly still required.

During post-production, if any placeholders were used during filming (such as human stand-ins for digitally created animals or creatures), VFX artists such as 3D modelers, FX artists, and compositors create, integrate, and render these elements into the final footage.

Postvis is another form of visualization used during post-production and often involves integrating the live-action footage with rough VFX. It is similar in design to previs but is created after filming and used mainly by editorial as shot placeholders (until a more final version can be added to the cut). It is also used to brief VFX teams on the timings, staging, etc., of a full or partial CG shot.

6. Final delivery - Once all team members have completed their parts, the final version of the film is rendered and delivered to audiences to enjoy!

Virtual Production vs. traditional filmmaking

The virtual production workflow is more front-loaded for real-time teams, with more asset creation and VFX work happening before and during principal photography. Virtual production provides more creative flexibility earlier in the filmmaking process and produces a much higher-quality end product. Its non-linear, more collaborative steps also open up more opportunities.

The differences between virtual production and traditional film production (Source: Fox VFX Lab)

Here are a few ways virtual production differs from, and has advantages over, traditional filmmaking:

- Visual effects are started during pre-production and principal photography rather than waiting until post-production. This allows for better integration between CG and live-action elements and reduces the work required in post-production.

- Scenes and sets are created digitally rather than requiring on-location shooting or complex sets. Along with saving time and money, virtual sets also help maintain continuity and allow for hassle-free pick-ups and reshoots.

- Concepts and assets can be mocked up much more quickly and can be edited in real time rather than waiting for post-production. This gives filmmakers more creative freedom and a clear way of conveying their vision to the cast and crew.

- Lighting is managed through the LED screens on the Volume stage rather than with extensive on-set lighting. This helps integrate the live action with the virtual world and reduces the work required on set and in post-production.

- Assets such as 3D models are cross-compatible and can be digitally repurposed, ensuring continuity and, again, saving productions time and money.

Read more about the top reasons to choose virtual production for filmmaking.

What jobs can I get in virtual production as a real-time 3D artist?

You can become a real-time 3D artist for film, games, or commercial industries, with new jobs emerging all the time. In film, a real-time 3D artist has many interesting career paths to choose from, including (but not limited to):

- Visualization artist

- Virtual production supervisor

- Live compositing artist

- LED wall cinematographer

- Real-time technical artist

“Technical artists are one of the most overlooked skillsets when it comes to almost any real-time production. The opportunities within this space are still largely untapped.”

Simon Warwick, CG Spectrum's Virtual Production Department Head

Learn more about virtual production workflows and prepare yourself for a career as a real-time artist.

Global demand for real-time 3D artists is expanding 10 percent faster than the overall job market, and demand for real-time skills has outpaced information technology skills by 50 percent! (Source: Burning Glass Technologies).

If you're ready to take the next step, enroll in our real-time 3D and virtual production courses to start a rich and rewarding journey in virtual production.